Google Glass Will Disrupt Social Media With Too Much Data

We’ve all seen the videos. Skydiving, sunsets with your girlfriend, lunches with friends (I had an amazing banana froyo shaped like Pikachu at this new place on Oak Lane!). With Google Glass, these otherwise mundane daily activities can actually become interesting, interactive and even fun. You can check in at places, take pictures and share them effortlessly, instantly and — more importantly — frequently. Kevin Systrom and Mike Krieger over at Instagram are probably jumping up and down with excitement right now — but they shouldn’t be, and here’s why.

At this point, no widely used social network can effectively manage the volume of photos that accessible, wearable social tech will produce.

There is a glaring problem with current social networks, specifically those that deal in photos. It is captured by a funny picture I saw the other day comparing a teenage girl with Neil Armstrong: she went to the bathroom and took 37 pictures of herself in the mirror; he went to the moon and took five pictures of the universe. Over time, people change their habits because of the technology available to them and because of changes in social mores.

My Instagram feed is busy enough now at a time when, in order to take a picture, people still need to actively push a button on a device that otherwise resides in their pockets. When these frequent photographers get their hands (or heads) on a device that enables them to constantly share pictures of everything that interests them without them having to lift a finger — well, you see the problem.

At this point, no widely used social network effectively presents the information that will come along with those pictures.

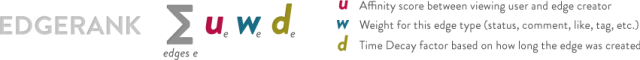

When there is too much information, aggregators and hosts look for ways to slow the stream. Take Facebook’s use of EdgeRank, for example, as a way to fight the flow of information. You don’t see every post from each of your friends when you look at your Facebook News Feed. Instead, Facebook assigns a rank to each item, or “edge,” that could be shown to you, and displays only a fraction of them.

However, this kind of social sieve comes at a cost: information gets buried quickly. The assumption that “if it’s old, it’s not relevant” is not accurate. Information about your significant other doesn’t lose value over time. What your room, house or car looks like does not become less relevant over time. Who wants an “edge” (to use Facebook parlance) on which they spent time and energy to simply disappear, buried under the mountains of data generated every day?

The next generation of social networks will enable users to easily digest and access large amounts of information.

I say next generation, and not new iteration, because existing social networks would need to rebuild themselves from scratch to do this; it is that drastic a change in how social information is curated. This is not a matter of redesigning the flow of a feed. It requires looking at information from a different angle. Structures suitable for social networks have been around for a while in other forms. For example, without the genre structure of Spotify or Netflix, all you would find is a long list of recent tracks and films.

Social networks need to embrace pictures as primary user data points.

Technology like Google Glass will enable and encourage users to take many more pictures than they do now. Developers need to take this into account when building the next-generation social networks. Pictures are not momentary events that lose value over time. If anything, we often value older photographs over newer ones. Why hide them behind a wall of recent information that some algorithm deemed more “relevant”? The next-generation social network will need to understand and support this. Even someone without $1,500 glasses can see that this is what we all should be striving toward.

Jared Barol connects We Heart Pics, a photo-based social network, with the outside world. You can reach him at jared@weheartpics.com or by following @jbarol on Twitter.